By Liu Yunhao (China Computer Federation)

In an ordinary community in Shenzhen, an AI recognition system is at work. It captures both visual and audio information simultaneously and identifies anomalies—such as window-breaking sounds, screams, and suspicious figures—in just 0.8 seconds, with an accuracy rate of 96.5%.

What sets the system apart is not the figures themselves, but how Zhong Yang, a 25-year-old engineer, turned a research idea into a product that changes the real world. The technology has been deployed in 10 communities and adopted by 50 manufacturing enterprises and 72 Internet application companies, and it is valued at 528 million yuan. In just four years, Zhong Yang has accomplished what most AI researchers never achieve in a lifetime: turning academic papers into industrial applications.

The story began with a simple question. In 2020, Zhong Yang, then a postgraduate student, was asked by his supervisor: "Can you design a system that achieves paper-level precision at product-level cost?"

The reality at that time was grim. Traditional AI recognition systems operated on a single dimension: pure vision was easily confused by lighting and occlusion, while pure audio was disturbed by ambient noise. During community visits, Zhong Yang found that "cameras trigger 100 false alarms every day, but only 3 are real issues." Meanwhile, deploying advanced international models cost more than 1.5 million yuan, far beyond the security budget of most communities.

A huge gap lay between academia and industry.

Zhong Yang's breakthrough came from three seemingly "unconventional" decisions:

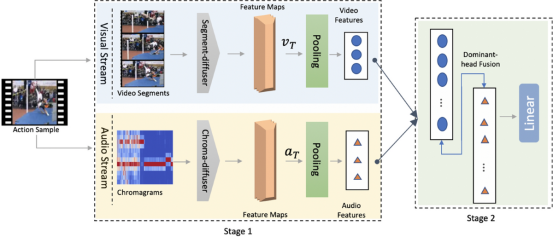

1. Multimodal Fusion

While all competitors focused on single-dimension optimization, he chose integration. He designed a joint inference framework: the visual module identifies people and behaviors, the audio module detects abnormal sounds, and the fusion module features his self-developed "confidence scoring system." The system automatically adjusts weights in different scenarios—70% visual weight in bright daylight, and increased audio weight at night.

"3,000 lines of core algorithm code solved this problem," Zhong Yang said. He later applied for Chinese and international patents, pushing accuracy from 75% to 96.5%.
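The scenario-dependent weighting described above can be illustrated with a minimal sketch. This is not Zhong Yang's patented confidence scoring system, whose details the article does not give. The names `ModalityScores`, `visual_weight`, `fuse`, and `is_anomaly`, the night-time weight of 0.4, and the 0.6 alert threshold are all assumptions for illustration; only the 70% daylight visual weight comes from the text.

```python
from dataclasses import dataclass


@dataclass
class ModalityScores:
    """Anomaly confidences in [0, 1] from each module (hypothetical type)."""
    visual: float
    audio: float


def visual_weight(is_daylight: bool) -> float:
    # 0.7 in bright daylight is the figure quoted in the article.
    # The night-time value 0.4 is an assumption: the article only says
    # that the audio weight increases at night.
    return 0.7 if is_daylight else 0.4


def fuse(scores: ModalityScores, is_daylight: bool) -> float:
    """Weighted average of the visual and audio confidences."""
    w_v = visual_weight(is_daylight)
    return w_v * scores.visual + (1.0 - w_v) * scores.audio


def is_anomaly(scores: ModalityScores, is_daylight: bool,
               threshold: float = 0.6) -> bool:
    # The threshold is an illustrative assumption, not a published value.
    return fuse(scores, is_daylight) >= threshold


# A loud window-breaking sound with a poor-quality image:
scores = ModalityScores(visual=0.2, audio=0.9)
print(is_anomaly(scores, is_daylight=True))   # vision dominates by day
print(is_anomaly(scores, is_daylight=False))  # audio dominates at night
```

Under these assumed weights, the same pair of readings can fall below the alert threshold by day and above it at night, which is the behavior the weighting scheme is meant to produce.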

2. Edge Computing

The international practice was to upload data to the cloud. Zhong Yang took the opposite approach—running the model directly on local servers. Data never leaves the community, ensuring 100% privacy protection; response time dropped from 3 seconds to 0.8 seconds. The trade-off was an extremely compact model.

Using knowledge distillation, he did something "crazy": compressing a 500MB large model to just 50MB. "It’s like condensing the knowledge of a library into a single book," he explained. "Retain the essence, remove redundancy." The result: a model 10 times smaller that runs 8 times faster, with accuracy dropping only slightly, from 96.5% to 96.2%.
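For readers unfamiliar with knowledge distillation, the core idea is that a small "student" model is trained to match the softened output distribution of a large "teacher" model. The sketch below shows the standard temperature-scaled loss in plain Python. The article does not say which variant Zhong Yang used, so the function names, the temperature of 4.0, and the example logits are assumptions for illustration only.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax over logits divided by a temperature (higher = softer)."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=4.0):
    """KL(teacher || student) on softened distributions, scaled by T^2.

    The T^2 factor keeps gradient magnitudes comparable across
    temperatures. In practice this term is usually combined with an
    ordinary cross-entropy loss on the true labels.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


# Hypothetical logits for three classes: normal, scream, window-break.
teacher = [1.0, 0.5, 4.0]
good_student = [0.9, 0.6, 3.8]
poor_student = [3.0, 0.5, 0.2]
print(distillation_loss(good_student, teacher))
print(distillation_loss(poor_student, teacher))
```

A student whose outputs track the teacher's receives a loss close to zero, while one that disagrees is penalized more heavily. Minimizing this loss is what lets the smaller model retain most of the larger model's behavior.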

3. Lightweight Hardware

The industry relied on top-tier GPUs and professional equipment, costing over 1.5 million yuan. Zhong Yang used industrial-grade Raspberry Pi boards, ordinary cameras, and ultrasonic microphones, at a total cost of only 60,000 yuan.

"For a 100-unit community, the traditional solution costs 15 million yuan, while mine costs 6 million yuan—a 60% cost reduction. All hardware is universal; property managers without AI knowledge can replace cameras themselves," he said.

The common logic behind the three decisions: optimize based on industrial constraints, not design under ideal conditions. This approach set Zhong Yang apart from international peers from the very beginning.

In June 2021, the system gained its first real user—Yunshanju Community. With over 2,000 households, the community recorded 3–4 burglary cases monthly. Manager Li, the community property director, was skeptical about AI.

Instead of explaining technical details, Zhong Yang ran a simple test: he installed 12 cameras and microphones, then "disappeared" so that Manager Li could verify the results himself. A week later, the feedback arrived: the false alarm rate had dropped from 15% to 3%, with no real anomalies missed.

More importantly, the system successfully prevented a burglary. When a suspect tried to climb in through the balcony, the system detected the combination of a suspicious figure and window-breaking sound and alerted police immediately; security guards arrived within 5 seconds. Manager Li became its biggest advocate, recommending it to other communities.

By the end of 2023, the system had been deployed in 10 communities covering 25,000 households and had prevented 47 thefts. Based on insurance payouts, it has created 94 million yuan in value.

One success sparked more possibilities. Zhong Yang realized the logic of multimodal fusion was universal—applicable to any problem requiring multi-dimensional judgment.

Industrial quality inspection became the second battlefield. Manual visual inspection on production lines was inefficient. Zhong Yang developed a second system: high-resolution cameras detect appearance defects, while special microphones identify physical flaws like crack sounds. The fusion module makes comprehensive judgments.

The result: 94% detection accuracy, with throughput rising from 3 to 45 items per minute, a 15-fold gain, for only a 20% increase in cost. Adopted by 50 manufacturers, including mobile phone makers and auto parts suppliers, it brought an average 80% efficiency improvement and 35% cost reduction. Each enterprise saves 5–15 million yuan annually, with total economic benefits exceeding 500 million yuan.

The third application was text sentiment analysis for e-commerce platforms to understand user reviews. A comment like "this product is okay" is ambiguous on its own, but combined with purchase history, social media tendencies, and aggregated reviews, AI can interpret it as "satisfied overall despite minor flaws."

Zhong Yang optimized the Transformer model: 40% fewer parameters, 6x faster processing speed, and 3% higher accuracy. Adopted by 72 enterprises, it processes over 100 million text entries daily.

From communities to factories and the Internet, one core idea—multimodal fusion—created diverse value across three industrial scenarios, with total economic benefits of about 2 billion yuan.

In 2021, Zhong Yang published his first paper in a top international journal, "Multimodal Fusion for Anomaly Detection: A Lightweight Edge Computing Approach," which has been cited 143 times. But he called this "just the tip of the iceberg."

The paper described a general framework. Real innovation lay beyond it. The model achieved 99% accuracy on standard datasets, but only 71% in real communities. He spent six months collecting real-world data, retraining the model, and adding boundary condition processing, finally reaching 96.5% accuracy.

"Those six months of work will never be written into academic papers. Papers require universality and reproducibility; industrialization demands pertinence and robustness," Zhong Yang said.

In 2024, he was unanimously elected to the Expert Committee of the Shenzhen CIO Association, a membership described as comparable in standing to IEEE Senior Member, with a selection rate of less than 1%. He also serves as a visiting professor at several universities but chose not to pursue a PhD. "If a technology only helps publish papers but not society, it is meaningless," he stated.

MIT’s AI Lab invited him as a visiting researcher. "Their first question was: how did you achieve such low cost?" Their system cost 2 million US dollars, while Zhong Yang’s cost only 400,000 yuan, roughly one-thirtieth as much. The key difference was mindset: international teams pursued "state-of-the-art algorithms + best hardware" and passed the costs on to the market, whereas Zhong Yang started from industrial constraints. "I knew from the beginning the product would be used in middle-class Chinese communities, with no 2-million-yuan budget."

Zhong Yang also faced failures. In 2021, he tried applying the system to factory inspection robots. Factory noise crippled his audio system, and oil pollution severely reduced visual accuracy. "I failed after six months, which taught me not all scenarios suit multimodal fusion," he said.

As applications expanded, he prioritized privacy. A critical decision: all videos are deleted immediately after local processing; the system only retains the conclusion "an anomaly occurred." "This adds complexity and cost, but I don’t want a system that invades privacy," he said.
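The "process locally, keep only the conclusion" policy can be sketched as follows. This is an illustration under stated assumptions, not the deployed system: `AnomalyEvent`, `process_and_discard`, and the `detect` callback are hypothetical names, and the raw data is modeled as in-memory `bytearray` buffers.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass(frozen=True)
class AnomalyEvent:
    """The only record retained: a timestamp and a label, no raw media."""
    timestamp: str
    label: str


def process_and_discard(
    frame: bytearray,
    audio: bytearray,
    detect: Callable[[bytearray, bytearray], Optional[str]],
) -> Optional[AnomalyEvent]:
    """Run detection on raw buffers, then empty them unconditionally."""
    try:
        label = detect(frame, audio)
    finally:
        # Drop the raw data even if detection raises an exception.
        frame.clear()
        audio.clear()
    if label is None:
        return None
    return AnomalyEvent(datetime.now(timezone.utc).isoformat(), label)


# Usage with a stand-in detector (an assumption for the example):
frame, audio = bytearray(b"\x01\x02\x03"), bytearray(b"\x04\x05")
event = process_and_discard(frame, audio, lambda f, a: "window_break")
print(event.label, len(frame), len(audio))
```

Clearing the buffers in a `finally` block means the raw media is dropped whether or not detection succeeds, which matches the stated intent of never keeping video beyond local processing.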

To young researchers torn between academia and entrepreneurship, Zhong Yang advised: "It’s not an either-or choice. My success comes from 40% academic accumulation, 40% industrial practice, and 20% luck. Academia teaches you how to think; industry teaches you how to act. Both are needed."

His office has no honors—no awards, certificates, or trophies. Only photos from communities, factories, and users: parents enjoying safe communities, workers giving thumbs-up, five-star user reviews.

"These are my greatest honors," he said.

His story is just beginning. The next goal is "full multimodality"—adding thermal, pressure, and odor sensors beyond vision and audio, shifting from passive recognition to active early warning. But he noted this may take 5–10 years, and each additional sensor brings more privacy risks. "So I will be very cautious."

At 25, this engineer has turned a research idea into a real product in four years, applied it to real scenarios, created tangible value, and earned recognition in both academia and industry.

This is more than a personal success: it epitomizes China’s AI industrialization. In international competition, our advantage lies not in being smarter, but in optimizing under constraints, understanding real industrial needs deeply, and trading technical "perfection" for user value.

Zhong Yang represents a new generation of innovators. They pursue practicality over novelty in papers, balance across dimensions over single-dimension extremes, and localized innovation over international benchmarks.

Such innovators will ultimately change the world.

United News - unews.co.za